CMOS vs CCD

Summary: The difference between CMOS and CCD is that CMOS (complementary metal-oxide semiconductor, pronounced SEE-moss) is a chip technology used in some RAM chips, flash memory chips, and other types of memory chips because it provides high speeds and consumes little power. A CCD (charge-coupled device), by contrast, is a light-sensitive integrated circuit: each picture element (pixel) converts the light that strikes it into an electrical charge whose size corresponds to the intensity of that light.

CMOS

Some RAM chips, flash memory chips, and other types of memory chips use complementary metal-oxide semiconductor (CMOS, pronounced SEE-moss) technology because it provides high speeds and consumes little power. Because CMOS draws so little power, a small battery can keep a CMOS memory chip powered so that it retains its contents even when the computer is off. Battery-backed CMOS memory chips, for example, keep the calendar, date, and time current while the computer is switched off. The flash memory chips that store a computer’s startup information often use CMOS technology as well.

CCD

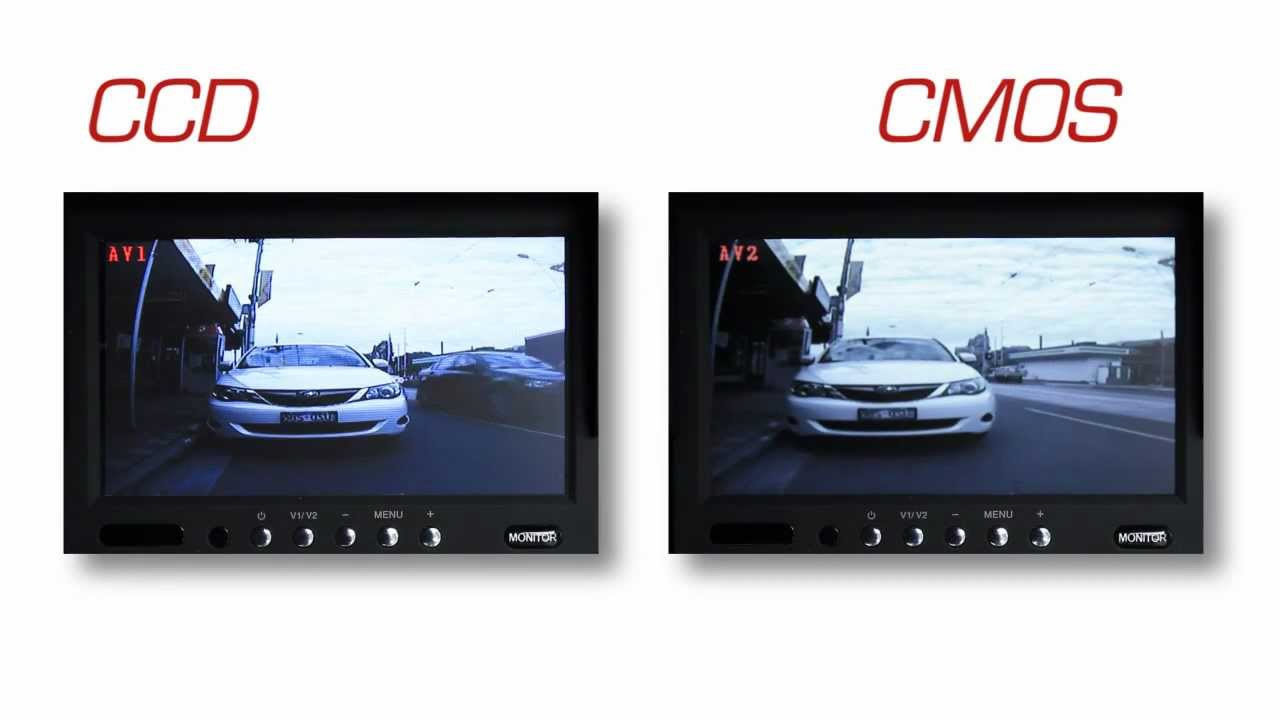

A CCD (charge-coupled device) is a light-sensitive integrated circuit. Each picture element (pixel) on a CCD converts the light that strikes it into an electrical charge, and the size of that charge corresponds to the intensity of the light. In short, CCDs record the data for an image by converting light into electrical charge. In a system that supports 65,536 colors (16-bit color), each color has its own distinct value that can be stored and retrieved. CCDs are most commonly used in digital cameras, and devices such as scanners, bar code readers, and telescopes also use CCD technology. The CCD was invented at Bell Labs (later part of Lucent Technologies) by Willard Boyle and George Smith in 1969.
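To make the 65,536-color figure concrete, here is a minimal sketch of how a 16-bit color value can be laid out. It assumes the common RGB565 layout (5 bits for red, 6 for green, 5 for blue), which is chosen here for illustration and is not something the text above specifies; the function names `pack_rgb565` and `unpack_rgb565` are hypothetical.

```python
# A minimal sketch, assuming the common RGB565 layout for 16-bit color:
# 5 bits for red, 6 bits for green, 5 bits for blue (an illustrative choice).

RED_BITS, GREEN_BITS, BLUE_BITS = 5, 6, 5


def pack_rgb565(r: int, g: int, b: int) -> int:
    """Pack 8-bit-per-channel RGB values into one 16-bit color value (hypothetical helper)."""
    for channel in (r, g, b):
        if not 0 <= channel <= 255:
            raise ValueError("channel values must be in 0..255")
    # Keep only the most significant bits of each 8-bit channel.
    r5 = r >> (8 - RED_BITS)
    g6 = g >> (8 - GREEN_BITS)
    b5 = b >> (8 - BLUE_BITS)
    return (r5 << (GREEN_BITS + BLUE_BITS)) | (g6 << BLUE_BITS) | b5


def unpack_rgb565(value: int) -> tuple[int, int, int]:
    """Split a 16-bit color value back into its 5/6/5-bit channel fields (hypothetical helper)."""
    if not 0 <= value <= 0xFFFF:
        raise ValueError("value must fit in 16 bits")
    r5 = value >> (GREEN_BITS + BLUE_BITS)
    g6 = (value >> BLUE_BITS) & ((1 << GREEN_BITS) - 1)
    b5 = value & ((1 << BLUE_BITS) - 1)
    return r5, g6, b5


# Total number of distinct colors a 16-bit value can represent: 2**16.
TOTAL_COLORS = 2 ** (RED_BITS + GREEN_BITS + BLUE_BITS)

if __name__ == "__main__":
    print(TOTAL_COLORS)                      # 65536
    print(hex(pack_rgb565(255, 255, 255)))   # 0xffff
    print(unpack_rgb565(0xF800))             # (31, 0, 0)
```

Every one of the 65,536 possible 16-bit values maps to exactly one color, which is why each color has its own value that can be stored and recovered. Green gets the extra bit in this layout because the human eye is most sensitive to green, but other 16-bit layouts exist.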
